A practical guide to negotiating AI vendor terms: data use, training limits, security, audit rights, and liability, without slowing procurement.

AI adoption is now routine. What is not routine is how most organisations buy AI. Many businesses still procure AI tools like ordinary software: click accept, sign an order form, and move on. In 2026, that approach creates avoidable risk. AI changes the procurement risk surface: data may be reused in unexpected ways, outputs may affect customers and employees, and models can change after signature.

Practical rule: AI risk starts before the first prompt, inside your contract.

Contents

- What changed in 2026 and why AI contracts matter more

- The AI procurement risk map

- The 12 clauses to demand

- Case example: AI support tool adoption

- Common mistakes companies make

- 30-minute contract review checklist

- 30-day implementation plan

- FAQ

1. What Changed in 2026 and Why AI Vendor Contracts Matter More

Three shifts make AI contracts materially different from standard SaaS procurement:

- AI is embedded into core operations. Support, marketing, finance, HR, fraud, and analytics workflows increasingly depend on AI features.

- Models update continuously. What you buy today can change next month, affecting accuracy, cost, and risk.

- Evidence expectations have increased. Partners and enterprise customers now ask for vendor terms, security posture, and governance controls as part of due diligence.

Helpful global references include the NIST AI Risk Management Framework and the NIST Privacy Framework.

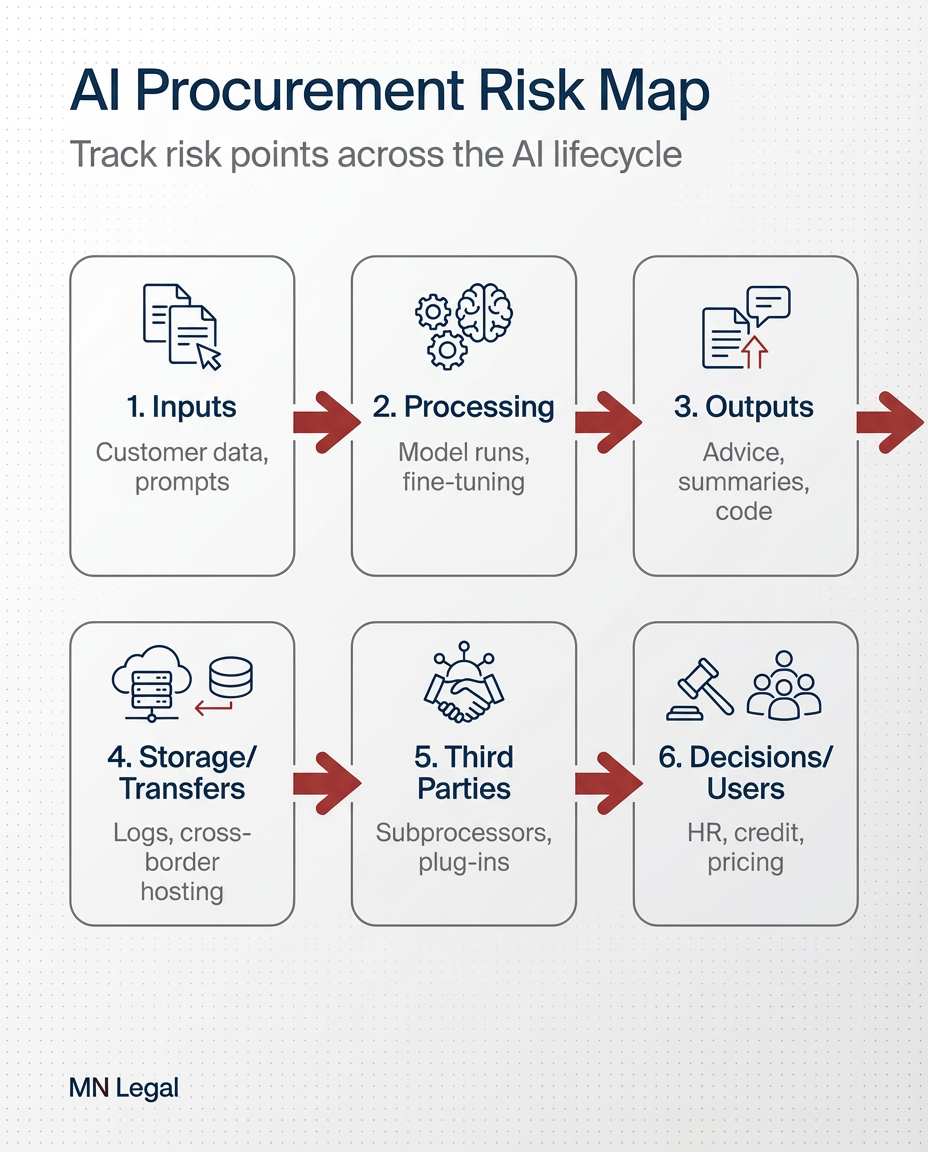

2. The AI Procurement Risk Map: What You Are Really Buying

Before negotiating clauses, align internally on what the tool actually does. Most procurement surprises happen because teams do not map data and decision pathways before signing.

Questions Your Team Should Answer Before Signing

- Inputs: What data goes in: customer tickets, IDs, HR data, financial data, call recordings?

- Outputs: What comes out: recommendations, replies, scores, summaries?

- Training: Does the vendor train on your content by default?

- Location: Where is data stored and processed? Are there cross-border processing concerns?

- Third parties: Which sub-processors or model providers are involved?

- Change control: Can the vendor materially change the model or terms without notice?

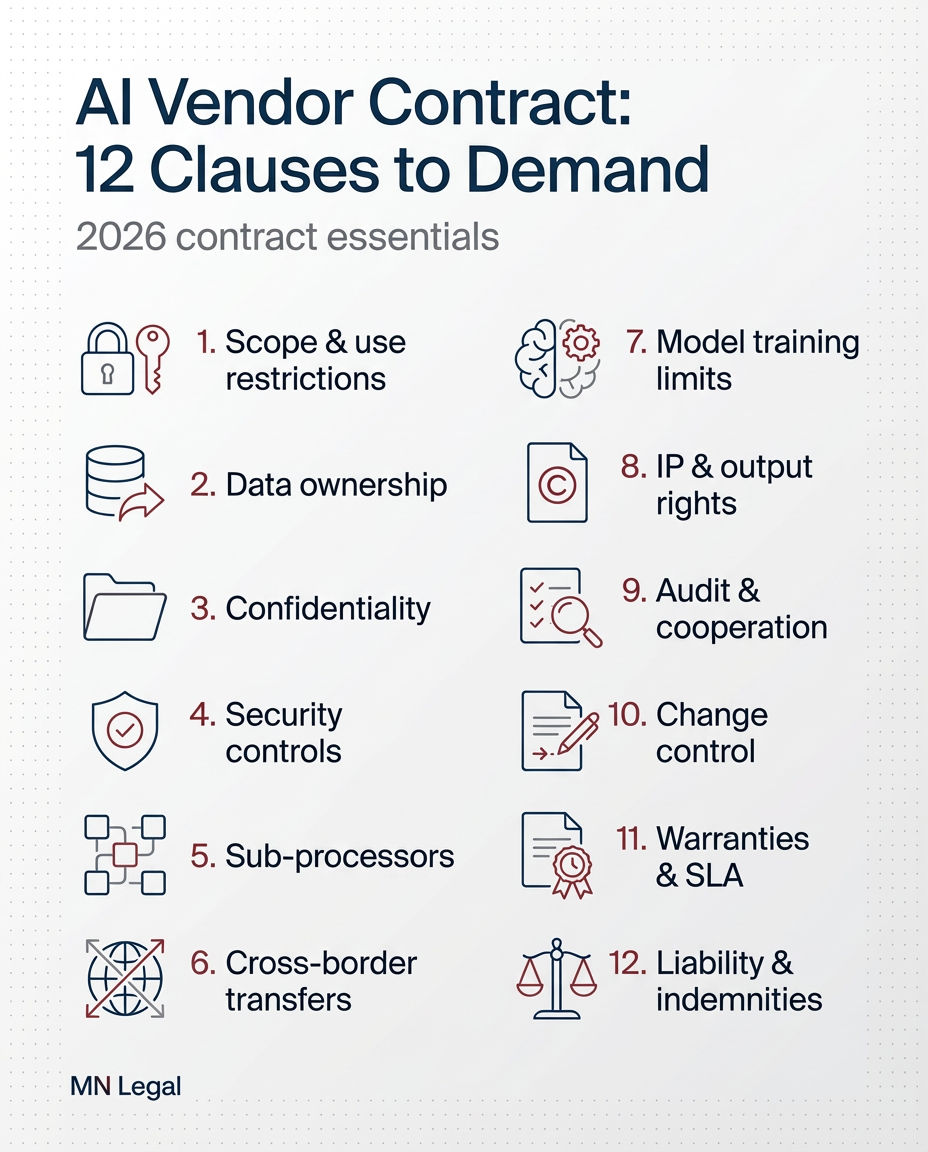

3. The 12 AI Vendor Contract Clauses to Demand in 2026

1) Data Use Restrictions

Limit processing strictly to service delivery. Avoid broad “business purposes” language that could expose your data to reuse you did not intend.

2) Training and Improvement: Opt-In, Not Default

Require an explicit opt-in before your data, prompts, or outputs are used to train or improve models. Without this, your confidential information could become part of a vendor’s training dataset.

3) Retention, Deletion, and Exit Obligations

Define retention periods, deletion timelines, and how deletion is confirmed after termination. Ensure you have audit rights to verify compliance.

4) Confidentiality Covering Prompts, Outputs, and Derived Data

Prompts can contain trade secrets and personal data. Outputs can create sensitive derivatives. Your contract must cover both explicitly.

5) Security Controls That Are Specific, Not Vague

Anchor security to concrete commitments: encryption standards, access controls, logging, and vulnerability management. Demand specifics, not general assurances.

6) Sub-Processor Controls and Change Notifications

Get an up-to-date sub-processor list, notice periods for changes, and a right to object where risk is high. Ensure flow-down obligations are in place.

7) Incident and Breach Notification Timelines

Define notice timelines and cooperation obligations so you can meet your own regulatory and client requirements after an incident.

8) Audit Rights and Reporting

Where full audits are not feasible, require structured alternatives: SOC 2 or ISO reports, penetration test summaries, and security questionnaires. You need real visibility, not just promises.

9) Change Control for Material Model Updates

Require notice of material changes, transparency on impact, and exit or rollback rights where risk or performance materially changes. The model you signed up for may not be the one you are using next month.

10) IP and Output Rights

Clarify your rights to use outputs commercially, identify any restrictions on that use, and ensure your inputs remain your property. Do not assume ownership without a clear contractual basis.

11) Warranties and Disclaimers

For critical use cases, avoid accepting “as-is” terms without meaningful commitments on security, performance, or compliance. Negotiate warranties that match your actual risk profile.

12) Liability Allocation That Matches Risk

Liability caps and exclusions should reflect the sensitivity of data processed and the impact of the use case. Consider tailored indemnities where appropriate.

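For broader governance guidance, see the materials published by the European Data Protection Board (EDPB) and the UK Information Commissioner's Office (ICO).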
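One way to make the twelve clauses usable during a review is to track them as a simple gap list. The sketch below is illustrative only: the clause labels are shortened from the headings above, and the `missing_clauses` helper is a hypothetical name, not part of any standard tool.

```python
# Shortened labels for the twelve clauses listed in this section.
CLAUSES = [
    "Data use restrictions",
    "Training opt-in",
    "Retention, deletion, and exit",
    "Confidentiality of prompts and outputs",
    "Specific security controls",
    "Sub-processor controls",
    "Incident notification timelines",
    "Audit rights and reporting",
    "Change control for model updates",
    "IP and output rights",
    "Warranties and disclaimers",
    "Liability allocation",
]


def missing_clauses(covered: set[str]) -> list[str]:
    """Return the clauses not yet covered, in the order listed above."""
    return [clause for clause in CLAUSES if clause not in covered]


if __name__ == "__main__":
    # Hypothetical review result: only two clauses found in the vendor draft.
    found = {"Data use restrictions", "Specific security controls"}
    for gap in missing_clauses(found):
        print("Missing:", gap)
```

A list like this does not replace legal review; it only makes gaps visible so that negotiation effort goes to the clauses that are absent or weak.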

4. Case Example: SME Adopts an AI Support Tool

A growing services company implements an AI support assistant integrated into its helpdesk. Staff begin pasting screenshots into the tool to speed up ticket resolution. Those screenshots include customer IDs, account details, and internal notes.

A customer subsequently complains after receiving a response that reveals information that should not have been shared. No security breach occurred. The business now faces a confidentiality issue, a data protection question about what data was processed and under what terms, and commercial risk as clients begin asking for vendor due diligence evidence.

The first document everyone opens is the vendor agreement. What it says about data use, retention, training, security, incident notice, and cooperation determines how fast and how effectively the business can respond.

5. Common Mistakes Companies Make in AI Procurement

- Shadow procurement. Teams buy AI tools without legal or security review, so risk accumulates unnoticed.

- No AI use register. The business cannot state what AI tools are in use or what data they process.

- Assuming terms are non-negotiable. Many vendors negotiate, especially on business and enterprise plans. Always ask.

- Ignoring cross-border processing. The tool stack is often global by default, creating transfer obligations that go unaddressed.

- Relying on staff care alone. Without clear policy, training, and technical restrictions, sensitive data will be entered into external tools.

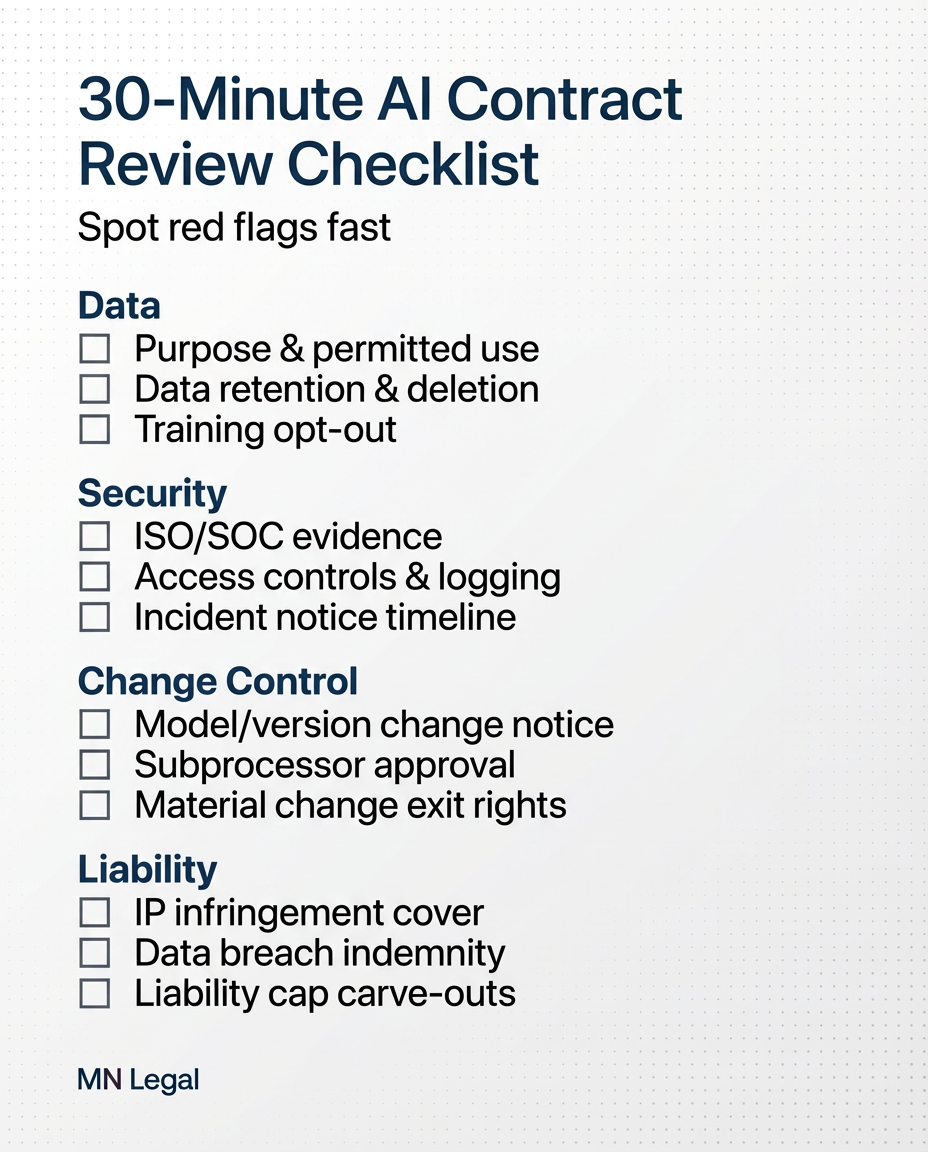

6. 30-Minute AI Vendor Contract Review Checklist

MN Legal supports organisations reviewing and negotiating AI vendor contracts and DPAs, mapping cross-border and vendor risk, drafting AI usage policies and governance frameworks, and advising on incident readiness where AI touches personal or confidential data.


7. What Businesses Should Do Next: 30-Day Plan

Week 1: Inventory and Ownership

- Create an AI use register: tool, owner, purpose, data types, vendor, and risk rating.

- Flag high-risk uses such as customer decisions, HR screening, and sensitive data processing.
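As a rough illustration of the Week 1 steps, an AI use register can start as a small structured record per tool. This is a minimal sketch under stated assumptions: the field names follow the list above, but the `RegisterEntry` type, the `rate` function, and the rule for what counts as high risk are hypothetical and should be replaced with your own criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Assumed markers of high-risk use, based on the examples in this article.
HIGH_RISK_PURPOSES = {"customer decisions", "hr screening"}
SENSITIVE_DATA_TYPES = {"customer ids", "hr data", "financial data", "call recordings"}


@dataclass
class RegisterEntry:
    tool: str
    owner: str
    purpose: str
    data_types: list[str]
    vendor: str


def rate(entry: RegisterEntry) -> Risk:
    """Assign an illustrative risk rating to one register entry."""
    if entry.purpose.lower() in HIGH_RISK_PURPOSES:
        return Risk.HIGH
    if any(d.lower() in SENSITIVE_DATA_TYPES for d in entry.data_types):
        return Risk.HIGH
    # Any other data leaving the organisation is treated as medium risk.
    return Risk.MEDIUM if entry.data_types else Risk.LOW


def high_risk(register: list[RegisterEntry]) -> list[RegisterEntry]:
    """Return the entries that should be flagged for legal and security review."""
    return [e for e in register if rate(e) is Risk.HIGH]


if __name__ == "__main__":
    # Hypothetical entries; tool and vendor names are placeholders.
    register = [
        RegisterEntry("Helpdesk assistant", "Support lead", "ticket replies",
                      ["customer IDs", "internal notes"], "ExampleVendor"),
        RegisterEntry("Copy drafting tool", "Marketing lead", "blog drafts",
                      ["public product text"], "OtherVendor"),
    ]
    for entry in high_risk(register):
        print("Flag for review:", entry.tool)
```

A spreadsheet serves the same purpose; what matters is that each tool has a named owner and a recorded view of the data it touches.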

Week 2: Procurement Controls

- Set a minimum contract standard covering DPA, security, change control, and incident notice.

- Define when legal and security sign-off is mandatory before a tool is adopted.

Week 3: Contract Cleanup

- Negotiate high-risk vendor terms or implement a contractual addendum.

- Document cross-border processing and sub-processors for critical tools.

Week 4: Training and Operational Rules

- Train teams on what data cannot be entered into external AI tools.

- Implement a practical escalation process for AI incidents such as harmful outputs or data exposure.

Frequently Asked Questions

Are AI vendor terms negotiable?

Often yes, especially for business and enterprise tiers. Where standard terms apply, use addenda to address data use, security, incident notice, audit rights, and change control.

Do we need a DPA when buying AI tools?

If the vendor processes personal data on your behalf, you typically need data processing terms covering purpose, security, sub-processors, international transfers, and deletion obligations.

What if the vendor changes the AI model after we sign?

Include a change control clause requiring notice of material changes, transparency on impact, and rights to pause, roll back, or terminate if risk or performance materially changes.

What is the biggest contractual risk in AI procurement?

There is rarely a single one. The most significant risks are unrestricted data use (including training on your content), unclear retention and deletion obligations, weak incident notification requirements, and liability caps that do not match the sensitivity of the data or the use case.

How can MN Legal help with AI vendor contracts?

MN Legal helps businesses implement practical procurement controls and defensible vendor terms for AI tools, aligned with privacy, security, and commercial realities. If you are procuring AI tools this quarter, a scoped contract and risk review can prevent expensive rework later.

Disclaimer: This article is for general information only and does not constitute legal advice. Requirements vary by jurisdiction and specific facts. For advice on your organisation’s situation, contact MN Legal.